#neural network generation

Text

i am not putting this in the master and commander tag

#cad commits neural network crimes#shipposting#cadmus rambles#neural network generation#midjourney#last night was full of intense shitposting

116 notes

Text

It had generated a complete reference with title, author, and everything. Completely fabricated.

It’s not a search engine, it’s a crappy imitation of one.

16K notes

Text

Will Vandom in AI by stemihc

#w.i.t.c.h.#disney#will vandom#irma lair#taranee cook#cornelia hale#hay lin#witch#ai generated#ai#neural network

55 notes

Text

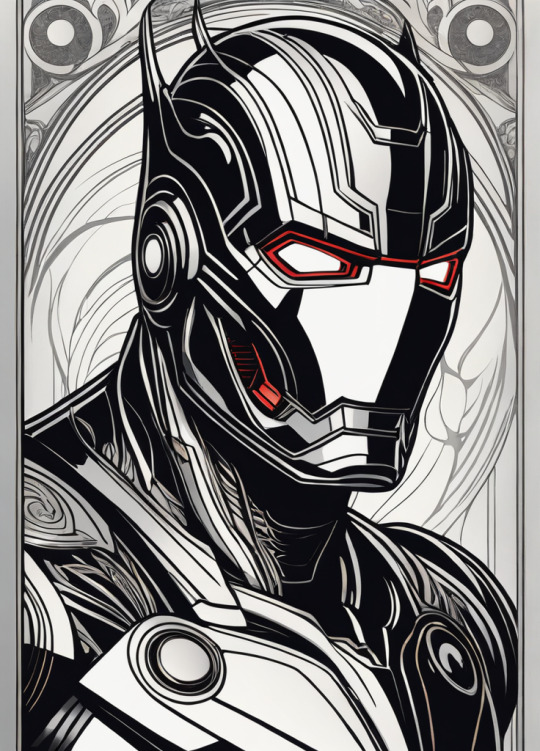

Hela

Ultron

Mysterio

Venom

Doctor Doom

Red Skull

Green Goblin

Model: Dreamshaper XL 1.0

#art#ai artwork#ai art#aiart#stable diffusion#stability ai#StableDiffusion#ai generated art#generative art#neural networks#ai generated images#generative ai#generative#marvel#marvel villains#hela#ultron#mysterio#venom#doctordoom#redskull#greengoblin

83 notes

Text

Koi carp illuminated by the sun.

#нейросеть#пруд#кои#лотосы#лучи#digital art#art#ai artwork#ai art#neural networks#generated artwork#generative art#dream#photoshop#wombo art#koi carp#sun rays#lotuses#pond#my ai art#my digital edit#illustrators on Tumblr#ai art gallery

80 notes

Photo

Cute Pinups (workflow in comments)

Give us a follow on Twitter: @StableDiffusion

h/t itsnotrealx

#ai art#art#aiart#StableDiffusion#digital art#ai artwork#stability ai#stable diffusion#dall e ai#dallemini#ai generated#digital painting#deep learning#ai generated art#neural networks#deep dream#generative#generated artwork

154 notes

Text

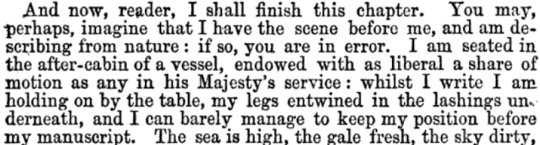

Detecting AI-generated research papers through "tortured phrases"

So, a recent paper describes a new way to figure out whether a "research paper" is, in fact, phony AI-generated nonsense. How, you may ask? The same way teachers and professors detect that you just copied your paper from online and threw a thesaurus at it!

It looks for “tortured phrases”: phrases that resemble standard field-specific jargon but have seemingly been mangled by a thesaurus. Here are some examples (transcript below the cut):

profound neural organization - deep neural network

(fake | counterfeit) neural organization - artificial neural network

versatile organization - mobile network

organization (ambush | assault) - network attack

organization association - network connection

(enormous | huge | immense | colossal) information - big data

information (stockroom | distribution center) - data warehouse

(counterfeit | human-made) consciousness - artificial intelligence (AI)

elite figuring - high performance computing

haze figuring - fog/mist/cloud computing

designs preparing unit - graphics processing unit (GPU)

focal preparing unit - central processing unit (CPU)

work process motor - workflow engine

facial acknowledgement - face recognition

discourse acknowledgement - voice recognition

mean square (mistake | blunder) - mean square error

mean (outright | supreme) (mistake | blunder) - mean absolute error

(motion | flag | indicator | sign | signal) to (clamor | commotion | noise) - signal to noise

worldwide parameters - global parameters

(arbitrary | irregular) get right of passage to - random access

(arbitrary | irregular) (backwoods | timberland | lush territory) - random forest

(arbitrary | irregular) esteem - random value

subterranean insect (state | province | area | region | settlement) - ant colony

underground creepy crawly (state | province | area | region | settlement) - ant colony

leftover vitality - remaining energy

territorial normal vitality - local average energy

motor vitality - kinetic energy

(credulous | innocent | gullible) Bayes - naïve Bayes

individual computerized collaborator - personal digital assistant (PDA)
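The lookup idea above can be sketched in a few lines of Python. This is only a rough illustration, not the paper's actual method: the dictionary holds a handful of pairs copied from the list above (with the `(a | b)` alternatives expanded by hand), and the function name `find_tortured_phrases` is made up for this example.

```python
import re

# A small sample of the pairs listed above: tortured phrase -> expected term.
# Assumption: a plain hand-built dictionary; a real detector would need a
# much larger, curated list.
TORTURED_PHRASES = {
    "profound neural organization": "deep neural network",
    "fake neural organization": "artificial neural network",
    "counterfeit neural organization": "artificial neural network",
    "colossal information": "big data",
    "haze figuring": "fog/mist/cloud computing",
    "irregular backwoods": "random forest",
    "gullible Bayes": "naïve Bayes",
    "counterfeit consciousness": "artificial intelligence (AI)",
}


def find_tortured_phrases(text):
    """Return (tortured phrase, expected term, count) for every phrase found.

    Matching is case-insensitive and tolerates any whitespace between words.
    """
    hits = []
    for phrase, expected in TORTURED_PHRASES.items():
        words = [re.escape(word) for word in phrase.split()]
        pattern = r"\b" + r"\s+".join(words) + r"\b"
        count = len(re.findall(pattern, text, flags=re.IGNORECASE))
        if count:
            hits.append((phrase, expected, count))
    return hits


if __name__ == "__main__":
    sample = (
        "We train a profound neural organization on colossal information "
        "and compare it with irregular backwoods."
    )
    for phrase, expected, count in find_tortured_phrases(sample):
        print(f"{phrase!r} x{count} (probably meant: {expected})")
```

A hit is only a hint that a thesaurus was involved, so anything flagged this way still needs a human read.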

86 notes

Text

Thought: we shouldn't be calling all these "AI" things Artificial Intelligence.

Instead, I propose we use the term "Algorithmic Generators", or "AG" for short, for these types of things.

Because that better explains what they actually are, and also doesn't incorrectly peg them as "intelligent" or cause confusion about what AI actually means anymore.

106 notes

Photo

A city made out of stars in the Aurora Borealis - Midjourney

713 notes

Photo

Happy Pride Month I guess

Follow us on Instagram! https://www.instagram.com/dalle2_pics/

The heading of this post was used to generate the image.

#AI artwork#AI art#aiart#DALLE#dall-e#dalle2#dall e#generative ai#generated artwork#generative#digital art#deep learning#generative art#artificial intelligence#dallemini#art#AI#digital painting#neural networks#pride

575 notes

Text

I need to know what a large language model trained only on Tumblr content would be like. But I also don't want it to take over the world. Because, obviously, it would.

94 notes

Text

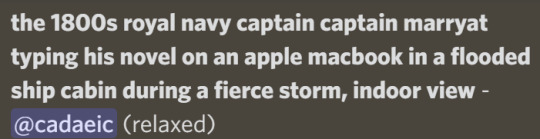

in honour of and apologies to @marryat92

as generated from neural network ai midjourney

more information at @teuthisdreams

#captain marryat#frederick marryat#cad commits neural network crimes#neural network generation#neural network art#midjourney#shipposting

107 notes

Text

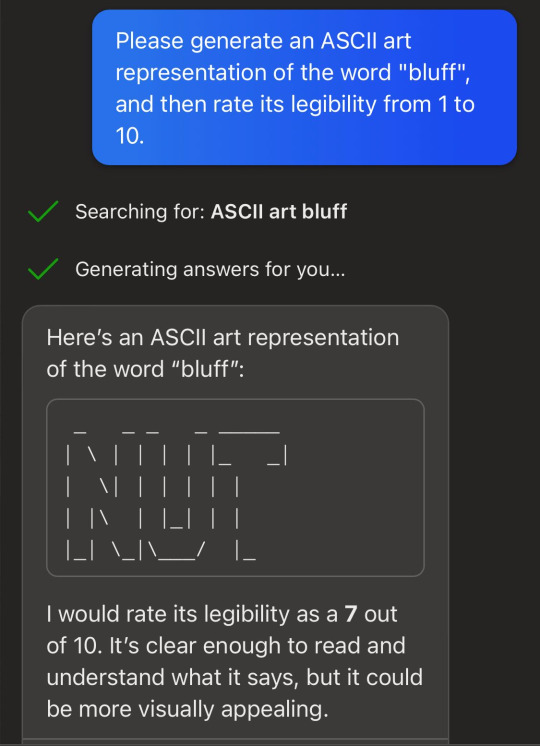

When questioned, chatgpt doubles down on how it is definitely correct.

But it's not relying on some weird glitchy interpretation of the art itself, a la adversarial turtle-gun. It just reports the drawing as definitely being of the word "lies" because that kind of self-consistency is what would happen in the kind of human-human conversations in its internet training data. I tested this by starting a brand new chat and then asking it what the art from the previous chat said.

Google's bard, on the other hand, interprets it differently.

Bard has the same tendency to generate illegible ASCII art and then praise its legibility, except in its case, all its art is cows.

Not to be outdone, bing chat (GPT-4) will also praise its own ASCII art - once you get it to admit it can even generate and rate ASCII art. For the "balanced" and "precise" versions I had to make my request all fancy and quantitative.

With Bing chat I wasn't able to ask it to read its own ASCII art because it strips out all the formatting, which leaves the art illegible - oh wait, no, even the "precise" version tries to read it anyways.

These language models are so unmoored from the truth that it's astonishing that people are marketing them as search engines.

More at AI Weirdness

#neural networks#chatgpt#bard#bing chat#bing#gpt-4#it's not always about you chatbot#automated bullshit generator#bard's cow thing might be because of the cowsay linux command

6K notes

Text

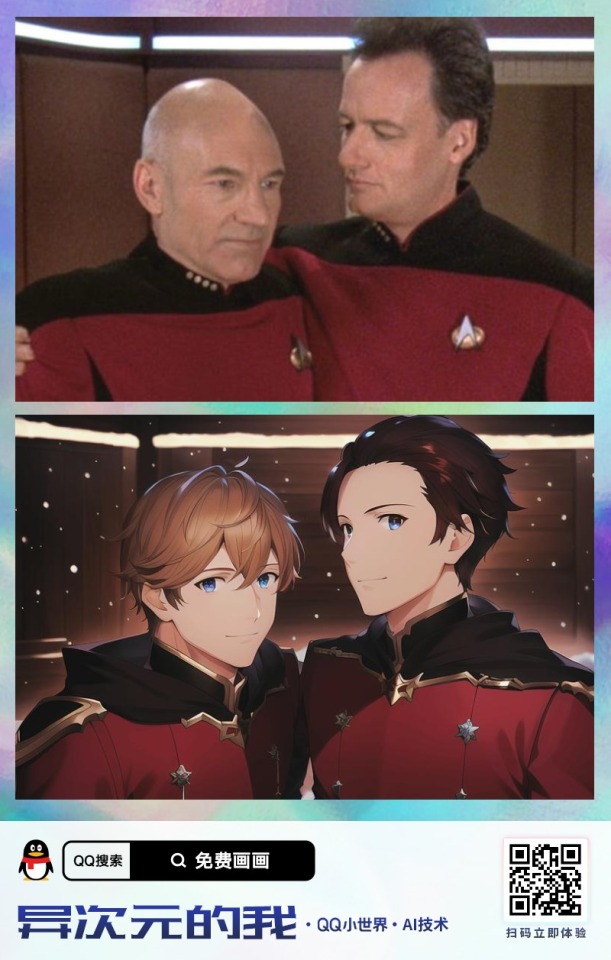

I came across this neural network that turns photos of people into anime, and here are the results:

Q looks like a typical anime villain but OMG he's hot 🔥

This neural network definitely has a problem with guessing age. And with bald people...

But the Starfleet uniform looks great!✨

*Here is a link to this neural network if you are interested heh*

#q star trek#qcard#john de lancie#patrick stewart#captain picard#star trek#star trek the next generation#star trek tng#neural networks

67 notes

Text

A cute ghost in the forest.

#нейросеть#привидение#луна#звезды#лес#грибы#stability.ai#digital art#art#ai artwork#aiart#ai art#generative#neural networks#generated artwork#ai generated art#ai generated images#generative art#dream#photoshop#moon art#ghost#stars#forest#trees and forest#mushrooms#my ai art#my digital edit

37 notes
