#code is speech

Text

"Open" "AI" isn’t

Tomorrow (19 Aug), I'm appearing at the San Diego Union-Tribune Festival of Books. I'm on a 2:30PM panel called "Return From Retirement," followed by a signing:

https://www.sandiegouniontribune.com/festivalofbooks

The crybabies who freak out about The Communist Manifesto appearing on university curricula clearly never read it – chapter one is basically a long hymn to capitalism's flexibility and inventiveness, its ability to change form and adapt itself to everything the world throws at it and come out on top:

https://www.marxists.org/archive/marx/works/1848/communist-manifesto/ch01.htm#007

Today, leftists signal this protean capacity of capital with the -washing suffix: greenwashing, genderwashing, queerwashing, wokewashing – all the ways capital cloaks itself in liberatory, progressive values, while still serving as a force for extraction, exploitation, and political corruption.

A smart capitalist is someone who, sensing the outrage at a world run by 150 old white guys in boardrooms, proposes replacing half of them with women, queers, and people of color. This is a superficial maneuver, sure, but it's an incredibly effective one.

In "Open (For Business): Big Tech, Concentrated Power, and the Political Economy of Open AI," a new working paper, Meredith Whittaker, David Gray Widder and Sarah Myers West document a new kind of -washing: openwashing:

https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4543807

Openwashing is the trick that large "AI" companies use to evade regulation and neutralize critics, by casting themselves as forces of ethical capitalism, committed to the virtue of openness. No one should be surprised to learn that the products of the "open" wing of an industry whose products are neither "artificial," nor "intelligent," are also not "open." Every word AI hucksters say is a lie, including "and," and "the."

So what work does the "open" in "open AI" do? "Open" here is supposed to invoke the "open" in "open source," a movement that emphasizes a software development methodology that promotes code transparency, reusability and extensibility, which are three important virtues.

But "open source" itself is an offshoot of a more foundational movement, the Free Software movement, whose goal is to promote freedom, and whose method is openness. The point of software freedom was technological self-determination, the right of technology users to decide not just what their technology does, but who it does it to and who it does it for:

https://locusmag.com/2022/01/cory-doctorow-science-fiction-is-a-luddite-literature/

The open source split from free software was ostensibly driven by the need to reassure investors and businesspeople so they would join the movement. The "free" in free software is (deliberately) ambiguous, a bit of wordplay that sometimes misleads people into thinking it means "Free as in Beer" when really it means "Free as in Speech" (in Romance languages, these distinctions are captured by translating "free" as "libre" rather than "gratis").

The idea behind open source was to rebrand free software in a less ambiguous – and more instrumental – package that stressed cost-savings and software quality, as well as "ecosystem benefits" from a co-operative form of development that recruited tinkerers, independents, and rivals to contribute to a robust infrastructural commons.

But "open" doesn't merely resolve the linguistic ambiguity of libre vs gratis – it does so by removing the "liberty" from "libre," the "freedom" from "free." "Open" changes the pole-star that movement participants follow as they set their course. Rather than asking "Which course of action makes us more free?" they ask, "Which course of action makes our software better?"

Thus, by dribs and drabs, the freedom leeches out of openness. Today's tech giants have mobilized "open" to create a two-tier system: the largest tech firms enjoy broad freedom themselves – they alone get to decide how their software stack is configured. But for all of us who rely on that (increasingly unavoidable) software stack, all we have is "open": the ability to peer inside that software and see how it works, and perhaps suggest improvements to it:

https://www.youtube.com/watch?v=vBknF2yUZZ8

In the Big Tech internet, it's freedom for them, openness for us. "Openness" – transparency, reusability and extensibility – is valuable, but it shouldn't be mistaken for technological self-determination. As the tech sector becomes ever-more concentrated, the limits of openness become more apparent.

But even by those standards, the openness of "open AI" is thin gruel indeed (that goes triple for the company that calls itself "OpenAI," which is a particularly egregious openwasher).

The paper's authors start by suggesting that the "open" in "open AI" is meant to imply that an "open AI" can be scratch-built by competitors (or even hobbyists), but that this isn't true. Not only is the material that "open AI" companies publish insufficient for reproducing their products; even if those gaps were plugged, the resource burden of doing so is so intense that only the largest companies could manage it.

Beyond this, the "open" parts of "open AI" are insufficient for achieving the other claimed benefits of "open AI": they don't promote auditing, or safety, or competition. Indeed, they often cut against these goals.

"Open AI" is a wordgame that exploits the malleability of "open," but also the ambiguity of the term "AI": "a grab bag of approaches, not… a technical term of art, but more … marketing and a signifier of aspirations." Hitching this vague term to "open" creates all kinds of bait-and-switch opportunities.

That's how you get Meta claiming that LLaMa-2 is "open source," despite being licensed in a way that is absolutely incompatible with any widely accepted definition of the term:

https://blog.opensource.org/metas-llama-2-license-is-not-open-source/

LLaMa-2 is a particularly egregious openwashing example, but there are plenty of other ways that "open" is misleadingly applied to AI: sometimes it means you can see the source code, sometimes that you can see the training data, and sometimes that you can tune a model, all to different degrees, alone and in combination.

But even the most "open" systems can't be independently replicated, due to raw computing requirements. This isn't the fault of the AI industry – the computational intensity is a fact, not a choice – but when the AI industry claims that "open" will "democratize" AI, they are hiding the ball. People who hear these "democratization" claims (especially policymakers) are thinking about entrepreneurial kids in garages, but unless these kids have access to multi-billion-dollar data centers, they can't be "disruptors" who topple tech giants with cool new ideas. At best, they can hope to pay rent to those giants for access to their compute grids, in order to create products and services at the margin that rely on existing products, rather than displacing them.

The "open" story, with its claims of democratization, is an especially important one in the context of regulation. In Europe, where a variety of AI regulations have been proposed, the AI industry has co-opted the open source movement's hard-won narrative battles about the harms of ill-considered regulation.

For open source (and free software) advocates, many tech regulations aimed at taming large, abusive companies – such as requirements to surveil and control users to extinguish toxic behavior – wreak collateral damage on the free, open, user-centric systems that we see as superior alternatives to Big Tech. This leads to the paradoxical effect of passing regulations to "punish" Big Tech that end up simply shaving an infinitesimal percentage off the giants' profits, while destroying the small co-ops, nonprofits and startups before they can grow to be viable alternatives.

The years-long fight to get regulators to understand this risk has been waged by principled actors working for subsistence nonprofit wages or for free, and now the AI industry is capitalizing on lawmakers' hard-won consideration for collateral damage by claiming to be "open AI" and thus vulnerable to overbroad regulation.

But the "open" projects that lawmakers have been coached to value are precious because they deliver a level playing field, competition, innovation and democratization – all things that "open AI" fails to deliver. The regulations the AI industry is fighting also don't necessarily raise the speech issues that are core to protecting free software:

https://www.eff.org/deeplinks/2015/04/remembering-case-established-code-speech

Just think about LLaMa-2. You can download it for free, along with the model weights it relies on – but not detailed specs for the data that was used in its training. And the source-code is licensed under a homebrewed license cooked up by Meta's lawyers, a license that only glancingly resembles anything from the Open Source Definition:

https://opensource.org/osd/

Core to Big Tech companies' "open AI" offerings are tools, like Meta's PyTorch and Google's TensorFlow. These tools are indeed "open source," licensed under real OSS terms. But they are designed and maintained by the companies that sponsor them, and optimize for the proprietary back-ends each company offers in its own cloud. When programmers train themselves to develop in these environments, they are gaining expertise in adding value to a monopolist's ecosystem, locking themselves in with their own expertise. This is a classic example of software freedom for tech giants and open source for the rest of us.

One way to understand how "open" can produce a lock-in that "free" might prevent is to think of Android: Android is an open platform in the sense that its sourcecode is freely licensed, but the existence of Android doesn't make it any easier to challenge the mobile OS duopoly with a new mobile OS; nor does it make it easier to switch from Android to iOS and vice versa.

Another example: MongoDB, a free/open database tool that was adopted by Amazon, which subsequently forked the codebase and tuned it to work on its proprietary cloud infrastructure.

The value of open tooling as a stickytrap for creating a pool of developers who end up as sharecroppers who are glued to a specific company's closed infrastructure is well-understood and openly acknowledged by "open AI" companies. Zuckerberg boasts about how PyTorch ropes developers into Meta's stack, "when there are opportunities to make integrations with products, [so] it’s much easier to make sure that developers and other folks are compatible with the things that we need in the way that our systems work."

Tooling is a relatively obscure issue, primarily debated by developers. A much broader debate has raged over training data – how it is acquired, labeled, sorted and used. Many of the biggest "open AI" companies are totally opaque when it comes to training data. Google and OpenAI won't even say how many pieces of data went into their models' training – let alone which data they used.

Other "open AI" companies use publicly available datasets like the Pile and CommonCrawl. But you can't replicate their models by shoveling these datasets into an algorithm. Each one has to be groomed – labeled, sorted, de-duplicated, and otherwise filtered. Many "open" models merge these datasets with other, proprietary sets, in varying (and secret) proportions.

Quality filtering and labeling for training data is incredibly expensive and labor-intensive, and involves some of the most exploitative and traumatizing clickwork in the world, as poorly paid workers in the Global South make pennies for reviewing data that includes graphic violence, rape, and gore.

Not only is the product of this "data pipeline" kept a secret by "open" companies, the very nature of the pipeline is likewise cloaked in mystery, in order to obscure the exploitative labor relations it embodies (the joke that "AI" stands for "absent Indians" comes out of the South Asian clickwork industry).

The most common "open" in "open AI" is a model that arrives built and trained, which is "open" in the sense that end-users can "fine-tune" it – usually while running it on the manufacturer's own proprietary cloud hardware, under that company's supervision and surveillance. These tunable models are undocumented blobs, not the rigorously peer-reviewed transparent tools celebrated by the open source movement.

If "open" was a way to transform "free software" from an ethical proposition to an efficient methodology for developing high-quality software, then "open AI" is a way to transform "open source" into a rent-extracting black box.

Some "open AI" has slipped out of the corporate silo. Meta's LLaMa was leaked by early testers, republished on 4chan, and is now in the wild. Some exciting stuff has emerged from this, but despite this work happening outside of Meta's control, it is not without benefits to Meta. As an infamous leaked Google memo explains:

Paradoxically, the one clear winner in all of this is Meta. Because the leaked model was theirs, they have effectively garnered an entire planet's worth of free labor. Since most open source innovation is happening on top of their architecture, there is nothing stopping them from directly incorporating it into their products.

https://www.searchenginejournal.com/leaked-google-memo-admits-defeat-by-open-source-ai/486290/

Thus, "open AI" is best understood as "free product development" for large, well-capitalized AI companies, conducted by tinkerers who will not be able to escape these giants' proprietary compute silos and opaque training corpuses, and whose work product is guaranteed to be compatible with the giants' own systems.

The instrumental story about the virtues of "open" often invokes auditability: the fact that anyone can look at the source code makes it easier for bugs to be identified. But as open source projects have learned the hard way, the fact that anyone can audit your widely used, high-stakes code doesn't mean that anyone will.

The Heartbleed vulnerability in OpenSSL was a wake-up call for the open source movement – a bug that endangered every secure webserver connection in the world, which had hidden in plain sight for years. The result was an admirable and successful effort to build institutions whose job it is to actually make use of open source transparency to conduct regular, deep, systemic audits.

In other words, "open" is a necessary, but insufficient, precondition for auditing. But when the "open AI" movement touts its "safety" thanks to its "auditability," it fails to describe any steps it is taking to replicate these auditing institutions – how they'll be constituted, funded and directed. The story starts and ends with "transparency" and then makes the unjustifiable leap to "safety," without any intermediate steps about how the one will turn into the other.

It's a Magic Underpants Gnome story, in other words:

Step One: Transparency

Step Two: ??

Step Three: Safety

https://www.youtube.com/watch?v=a5ih_TQWqCA

Meanwhile, OpenAI itself has gone on record as objecting to "burdensome mechanisms like licenses or audits" as an impediment to "innovation" – all the while arguing that these "burdensome mechanisms" should be mandatory for rival offerings that are more advanced than its own. To call this a "transparent ruse" is to do violence to good, hardworking transparent ruses all the world over:

https://openai.com/blog/governance-of-superintelligence

Some "open AI" is much more open than the industry-dominating offerings. There's EleutherAI, a donor-supported nonprofit whose model comes with documentation and code, licensed Apache 2.0. There are also some smaller academic offerings: Vicuna (UCSD/CMU/Berkeley); Koala (Berkeley) and Alpaca (Stanford).

These are indeed more open (though Alpaca – which ran on a laptop – had to be withdrawn because it "hallucinated" so profusely). But to the extent that the "open AI" movement invokes (or cares about) these projects, it is in order to brandish them before hostile policymakers and say, "Won't someone please think of the academics?" These are the poster children for proposals like exempting AI from antitrust enforcement, but they're not significant players in the "open AI" industry, nor are they likely to be for so long as the largest companies are running the show:

https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4493900

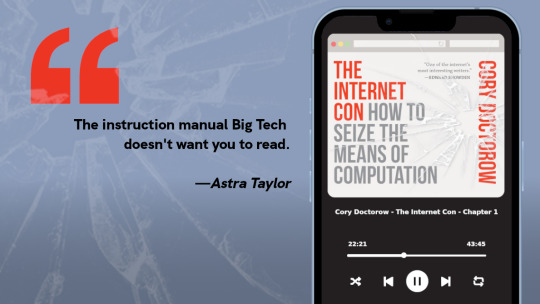

I'm kickstarting the audiobook for "The Internet Con: How To Seize the Means of Computation," a Big Tech disassembly manual to disenshittify the web and make a new, good internet to succeed the old, good internet. It's a DRM-free book, which means Audible won't carry it, so this crowdfunder is essential. Back now to get the audio, Verso hardcover and ebook:

http://seizethemeansofcomputation.org

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2023/08/18/openwashing/#you-keep-using-that-word-i-do-not-think-it-means-what-you-think-it-means

Image:

Cryteria (modified)

https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0

https://creativecommons.org/licenses/by/3.0/deed.en

#pluralistic#llama-2#meta#openwashing#floss#free software#open ai#open source#osi#open source initiative#osd#open source definition#code is speech

250 notes


Text

I love how Spider-Man: Into the Spider-Verse said “Anyone can be Spider-Man”. I love how it inspired everyone to imagine their own Spider-People, saving the day in their own universes, with all kinds of cool, interesting personalities and aesthetics and mutations and life stories and relationships. We all put pieces of our soul into these homemade heroes. We had fun. We found community. And then Spider-Man: Across the Spider-Verse said, “Wow, great job! You’ve really taken our message to heart. Well, get ready for even more of everything you liked from the first movie and a new message to complement the first. Anyone can be Spider-Man… and anyone can be pulled into a cult.”

So now we all have to contemplate whether our lovingly crafted heroes would ever be on Team Mandatory Trauma Because Martyr Complex or not and why.

#i for one made a second spider-person (diving belle) right after watching atsv#who IS on team mandatory trauma for reasons that make sense given her moral code and its context#her world has eldritch horror elements that give some of her enemies a sharper edge#and she has had to use lethal force#in a ‘stays true to her strict morality based around compassion nonetheless’ way like wonder woman#she’s used to tough utilitarian decisions#she’s learned that no hero can save everyone from intensely traumatic brushes with mind-breaking corruptive power#so when miguel gave his big speech she was ‘yeah okay. i get it. sometimes shit just happens’#it was actually very enjoyable going through the character creation process#from the starting point of ‘WOULD chase miles and restrain him by force’#and then working toward ‘otherwise a genuinely good person and superhero who could be the star of her own series’#atsv#spider man across the spider verse#across the spiderverse#spider-verse#smatsv#sm: atsv#spiderverse#spidersona

300 notes


Text

The way skk can basically talk without talking makes the whole Meursault thing so hilarious because even though fyodor was trying to play it off as if he was controlling chuuya (???) U know he felt like they were third wheeling him. He's like heh your shallow bond meanwhile they're laughing together at an inside joke from 5 years ago without even speaking or reacting

#Dazai basicaly was smiling the entire time even though he was 'in immense pain'#like ok..........#And u know fyodor was getting fomo#THEY WERE SPEAKING IN CODE THEOUGH THE SPEECHES#skk#soukoku#dazai osamu#nakahara chuuya#bsd#bungou stray dogs

512 notes


Text

Instead of saying "I want to kill myself" I now say "I'm going to violate the Hays Code." "I'm going to violate YouTube terms of service." Or "I'm going to violate the comics code." And I hate realizing all three of those mean the same thing. Progress is moving backwards in this society.

#anti censorship#anti capitalist#anti capitalism#196#my thougts#free speech#leftist#left#leftism#youtube#hays code#comics code authority

170 notes


Text

Imagine how many people JUST LIKE HER are in ICU, TRAUMA, BIRTH AND DELIVERY, NICU, STEP-DOWN UNITS, PSYCH WARDS, ELDERLY CARE, OBGYN, CARDIOLOGY, POST OP CARE, etc…

#indiana state university#protest#racism#hate speech#campus activism#cowboy carter#beyoncé#country music#yik yak#student activism#campus response#discrimination#protest demands#campus culture#university response#social media#campus newspaper#diversity#inclusion#racial discrimination#Code of Conduct#university administration#health care disparities#Black maternal health#structural racism#health care professionals#systemic inequities

56 notes


Text

Come gather 'round people. Wherever you roam. And admit that the waters. Around you have grown. And accept it that soon. You'll be drenched to the bone. If your time to you is worth savin'. And you better start swimmin'. Or you'll sink like a stone. For the times they are a-changin'

-the times they are a-changin', bob dylan

#ofmd#our flag means death#ofmd s2#ofmd s2 spoilers#izzy hands#im SO please rn#and the use of this song INSPIRED#it also really mirrors what Izzy's saying in his last speech here to the prince too really beautiful it's disgusting (affectionate)#pirate show viewers when there's narrative parallels 🤯#mine#god he's so martyr coded its ugh

101 notes


Text

how do you spot a fascist? well first it's important to do numerology to them. if any of their sentences are 14 words long that's fascism. If that fails, it's important to misapply one or two of the immortal principles of umberto eco. probably the one about the enemy being simultaneously strong and weak. it is very crucial throughout the whole process not to let discussion of their actions or policies enter into the conversation, as then we might actually learn what fascism is

#if you are looking for coded hh messages in netenyahu's speeches i would suggest u don't understand much about. israel.#blacklist

77 notes


Text

the way his words are so carefully curated, not only for the sake of precision, but so it fits a rhythm that is breathable for a slow speaker.

to say exactly what he means in a short sentence, with recurring pauses and a clear pronunciation. to prefer periods(.) over commas(,).

not to be needlessly verbose – much the opposite; because this vocabulary serves the purpose of clarity, organization and to hold the attention of the listener.

#talking to the wall#wd gaster#gaster#undertale#deltarune#and also because he's toothless so it takes an extra effort to make words sound correctly ahahaha (a headcanon)#another day of speech patterns being very important to me in terms of characterization#otherwise i will have trouble imagining it is this character saying a line#if we had canon wingdings read some fanfiction lines of his out loud we would sleep on the spot#and that is why these mannerisms are so important when fleshing him out!#there is just not enough fanfiction giving it enough attention to portray this OTL#to speak little yet say a lot#i like to imagine he is a very visual thinker and it adds to the thoughtfulness of his words#also re: wingdings as a visual code.#man who speaks in hands and whatnot#wistful sighing. damn papyrus you had it good when he would read you bedtime stories#this guy writes in hieroglyphs

50 notes


Text

i still think its a little bit insane that gira was destined by BLOOD to KILL jeramie but instead chose to deny his own fate and fight dugded with jeramie by his side instead

#kingohger#ohsama sentai kingohger#king ohger#giramie#gira hastie#gira husty#jeramie brasieri#the way the show glosses over this like yeah the hasties have been doing this for years yeah dugded said all this In Front of Jeramie#also i like to think this is where jeramie RLLY realizes hes in love with gira. his speech abt dictating his own fate and#showing that hes really ready to risk it all for the sake of jeramies kind/bugnarak and the peace of chikyuu..#jeramie's “thats more like it/there you go” when gira smirked here was so “thats the gira i know and fell in love with” coded#tbh giras actions here probably impacted jeramie a lot and is why jermy similarly “risked it all/threw everything away” by making himself#a villain(in itself also inspired by gira) later on...#kingohger spoilers

95 notes


Text

GUYS I HAVE DRAWN THEM SO MUCH THE PAST FEW DAYS. SO MUCH. I HAD TO GO TO MY DADS HOUSE FOR THE WEEKEND TOO BUT THAT DIDN'T STOP ME I SCAVENGED THAT HOUSE FOR PAPER TO DRAW ON. I HAVE SO MANY DRAWINGS AND DOODLES OF THEM IM SO SANE.

#anyway i love incorporate seths quiet speech into stuff#furroughs#mdarc#yakou furio#seth burroughs#mda:rc#raincode#rain code#rain code fanart#seth x yakou#yakou x seth#seth burroughs x yakou furio#yakou furio x seth burroughs#master detective archives#master detective archives: rain code#my art#wiki art

120 notes


Text

Don't assume I want to have a threesome with you because I'm bi.

It's actually because I'm slutty.

#river rambles#listen I'm in a good mood#this is going to get Dean Winchester speech bubbled isn’t it?#tbh this is a way I am dean coded so its fine

39 notes


Text

was rewatching the pilot again yesterday for fic reasons and thinking again about the sherlock-style screen annotations they had when barry was doing CSI work that they literally only did in the first ep and then never revisited again, presumably because they realized it'd be far too much effort to work out the details on such a precise level

and thinking about like. that barry allen with the hyper-precise exact measurements that he did by eye (with joe shaking his head in awe so you know that he's a CSI supergenius) vs. the leonard snart who timed his heists to the exact nanosecond (which again, presuming they ditched because it's a logistical nightmare to write dialogue that nitpicky and obsessive, and would be such a fucking pain to do on a week-to-week basis). like. yet another reason they are soulmates tbh. is audhd4autistic a thing the same way t4t is a thing? if it isn't then i'm making it a thing

#never noticed it before i became obsessed w autism but pilot barry is SCREAMING “stereotypical tv depiction of white male autistic savant”#like even the cadence of his speech and the level of clumsiness and social awkwardness was ramped up to an 11 in the pilot#literally i only watched half the ep and he accidentally bumped into like 4 people.#like... the lack of spatial awareness... he's so me. they really did go “the speedforce cured his appalling proprioception"#part of me is glad they dialled some of it back cos like. tv loooves to code characters as autistic in that very specific#way that's like. a big old stereotype. but then be like “wdym you interpret him as autistic. disgusting that you'd say that. die.”#but idk i also kinda liked it... again im ultimately glad they didn't stick w the sherlock-style annotation bc it would make writing casefi#SO much more difficult than it already is just in terms of like. how do you show that kind of thought process in a non-visual medium#in a way that's not incredibly boring and info-dumpy?#but i do have a soft spot for like. early seasons disaster barry allen who can't walk across a flat surface without crashing into something#and has no idea how to have a normal human conversation#my meta

46 notes


Photo

Look at the mouth on him!

#halbrand has maxed out his speech skill or is running on tgm cheat code! 8D#the rings of power#rings of power#halbrand#trop#rop#tropedit#ropedit#charlie vickers#lotr amazon#lotr on prime#my gifs#a subterranean gif

907 notes


Photo

top 10 the rookie relationships (as voted by the fandom):

#4. angela lopez and wesley evers

“You all think that you're pure evil and willing to do whatever your master asks, but you don't know anything about Angela or me. If you hurt her, I will call in every favor I am owed, and there's a lot. Rabid animals who will burn you to your component molecules while you beg me for mercy.”

#the rookie#therookieedit#wopez#wopezedit#angela x wesley#*#mine: gif#mine: the rookie#meme: top 10 the rookie relationships#MY LOVES#wesleys speech is so tim bradford about lucy chen coded

590 notes


Note

:3

he does look like it, doesn't he

#be more chill#bmc#happi's art#the sillies#gods ive forgotten how to draw michael#still trying to figure out different nose shapes w/ this new nose style#also ignore how the speech bubbles are a little out of order gah#anyways. the sillies.#jeremy is so weed cat coded fr

72 notes


Text

Biting the bars of my enclosure about autistic ford tonight. There's something about him using vocabulary and turns of phrase that seem "outdated" or "pretentious" that feels so painfully genuine to me. When people say he talks like that just to "try to sound smart" I wish I could explain what it's like to be so ostracized from your peers growing up that you spend all your time reading instead, to the point where you pick up your way of speaking from books instead of from people. And then what it's like for people to call you out for "talking weird" over and over again, not able to wrap their heads around why the fuck you would choose more archaic or technical or formal words than the simpler ones that surely come to everyone's minds first. What it's like to have to dedicate a sizable chunk of attention to filtering through every single word you say out loud in real time before you say it, to make absolutely sure that it isn't a word people will judge you for using or make fun of you for using, just so you'll have a chance of being taken seriously. Learning through trial and error how to filter out the words that other people don't think are normal or casual enough for the conversation, even though for you, the word choice that's "natural-sounding" enough for them is the third or fourth word you came up with when searching for the right way to phrase something in your head. I wish I could explain just how long it takes to say fucking anything after spending a lifetime doing that during every single conversation, and how repetitive and long-winded you end up being when you spend so long coming up with alternative ways of saying every little thing you ever think. And I wish people realized that, at the very least for autistic people and autistic-coded characters, speech that's seen as pretentious is really just the way they talk when they're not putting in the extra effort to filter through every word they say just so others will take the time to listen.

#ford meta#actuallyautistic#everyone go read the wikipedia page for 'stilted speech' right now#long post#ford isnt very good at masking. he doesn't have the kind of (unintentional) autistic coding that is Palatable To Neurotypicals.#definitely looking-too-deeply-at-a-kid-cartoon right now but in *some* ways. a world where the majority of people think its easy to like an#-understand ford is a world that would feel safe for me to unmask in.#i truly truly hate that fully explaining my thoughts on ford requires me to say so much about myself. but god is it such a crime-#-to use a fictional character as a lens through which to try and explain to people how to be more understanding and accepting-#-of things like this.#making fun of stilted speech is so normalized that people don't even realize they're making fun of someone for being weird.#people think its Someone Thinking They're Better Than You but its something people lay awake at night wishing they could stop doing.#and yet they still end up using the Wrong Words and being labeled a Pretentious Asshole just for talking differently than the norm.#maybe there really are people out there who deliberately use big words to try and sound smarter than everyone else. I don't know.#all I know is. in a world where its pretty obvious that people who use a discongruently complex vocabulary get made fun of for doing that.#why would someone deliberately trying to impress people do something that would only get them laughed at.#sorry for being genuine on main. as if its my fault </3

75 notes
