# Neural networks

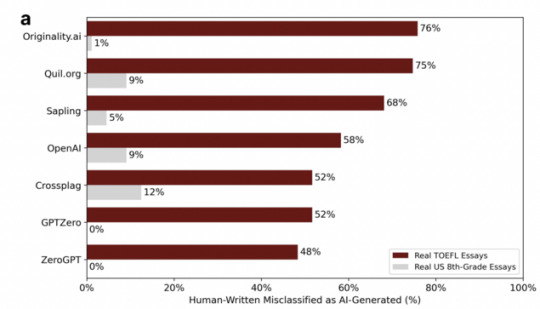

Nobody should be using GPT detectors for anything important.

The chart is from a recent study that found GPT detectors misclassified writing by non-native English speakers as AI-generated 48-76% of the time (!!!), compared to 0-12% for native speakers.

It is irresponsible to use AI-generated text detectors as evidence of academic misconduct, and that's putting it mildly.

#neural networks#gpt detector#bias#bias against non-native speakers#false positive#not only is it often just wrong#but it's wrong in a way that's biased against non-native speakers#stop using gpt detectors to detect cheating#stop it#feel free to print this out and hang it up at school

15K notes

One of the perennial problems with deep learning models like DALL-E is that if you train them too well, eventually they start precisely reproducing material from their training data set that just happens to match whatever criteria they’re given.

Given that these models are a. trained on random images scraped in bulk from the Internet, largely without human curation, and b. being touted as a potential substitute for human artists in certain commercial applications, I’m just waiting for the inevitable lawsuit where one of these models spits out an exact copy of some reasonably well-known piece of art, that copy is used in a commercial publication whose author is unaware of what the model has done, and some poor judge has to rule on whether an AI can commit plagiarism.

#computers#technology#ai#neural networks#dall-e#art#law#intellectual property#plagiarism#the stupidest cyberpunk future

3K notes

On the one hand, people who take a hardline stance on “AI art is not art” are clearly saying something naïve and indefensible (as though any process cannot be used to make art? as though artistry cannot still be involved in the set-up of the parameters and the choice of data set and the framing of the result? as though “AI” means any one thing? you’re going to have a real hard time with process music, poetry cut-up methods, &c.).

But all of this (as well as the takes that what's really needed is a crackdown on IP) is a distraction from a vital issue, namely that this is technology used to create and sort enormous databases of images, and the uses to which this technology is put in a police state are obvious: it's used in service of surveillance, incarceration, criminalisation, and the furthering of violence against criminalised people.

Of course we've long known that datasets are not "neutral" and that racist data will produce racist outcomes, and we've long known that the problem goes beyond the datasets (even carefully vetting datasets does not necessarily control for social factors). With regard to "predictive policing," this suggests that the criminalisation of supposed leftist "radicals" and racialised people (and the concepts creating these two groups overlap significantly; [link 1], [link 2]) is not an accidental flaw but intentional: a process is built so that it always finds people "suspicious" or "guilty," but because it is based on an "algorithm" or "machine learning" or so-called "AI" (processes that people tend to understand murkily, if at all), its results can be presented as innocent and neutral. These points have been raised repeatedly in the recent past with regard to "automatic" processes and tools that trawl the web to produce large datasets (e.g. facial recognition technology), so their almost complete absence from the discourse wrt "AI art" confuses me.

Abeba Birhane's thread here, summarizing this paper (h/t @thingsthatmakeyouacey), explains how the LAION-400M dataset was sourced/created, how it is filtered, and how images are retrieved from it (for this reason it's a good beginner explanation of what large-scale datasets and large neural networks are 'doing'). She goes into how racist, misogynistic, and sexually violent content is returned (and racist mis-categorisations are made) as a result of every one of those processes. She also brings up issues of privacy, how individuals' data is stored in datasets (even after the individual deletes it from where it was originally posted), and how it may be stored alongside metadata which the poster did not intend to make public. This paper (h/t thingsthatmakeyouacey [link]) looks at the ImageNet-ILSVRC-2012 dataset to discuss "the landscape of harm and threats both the society at large and individuals face due to uncritical and ill-considered dataset curation practices," including the inclusion of non-consensual pornography in the dataset.

Of course (again) this is nothing that hasn't already been happening with large social media websites or with "big data" (Birhane notes that "On the one hand LAION-400M has opened a door that allows us to get a glimpse into the world of large scale datasets; these kinds of datasets remain hidden inside BigTech corps"). And there's no un-creating the technology behind this: resistance will have to be directed towards demolishing the police / carceral / imperial state as a whole. But not all criticism of "AI" art can be dismissed as revolving around an anti-intellectual lack of knowledge of art history or else a reactionary desire to strengthen IP law (as though that would ever benefit small creators at the expense of large corporations...).

835 notes

Happy Valentine's Day to everyone, the holiday of love and tenderness! May love always be pure, faithful, and devoted. May your other halves always be by your side and protect you from all adversity and trouble. May your feelings be warm and strong, passionate and mutual.

Source: poems taken from the Internet.

#русский tumblr#neural networks#wombo art#art#digital art#my digital edit#fantasy art#digital drawing#photoshop#holiday#Happy Valentine's Day#14 february#floral#flowers#hearts#clipart#нейросеть#праздник#CДнем святого Валентина#14 февраля#цветы#фантазия#сердечки#клипарт

130 notes

I love you as certain dark things are to be loved, in secret, between the shadow and the soul.

PABLO NERUDA

One Hundred Love Sonnets: XVII

305 notes

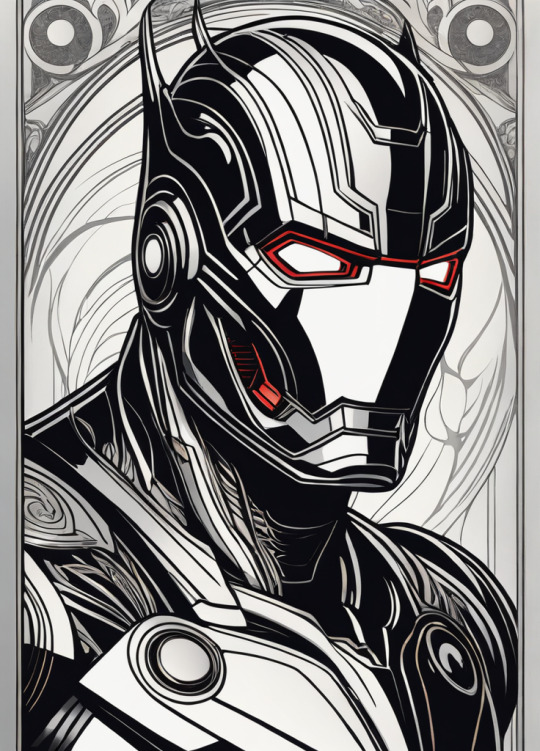

Hela

Ultron

Mysterio

Venom

Doctor Doom

Red Skull

Green Goblin

Model: Dreamshaper XL 1.0

#art#ai artwork#ai art#aiart#stable diffusion#stability ai#StableDiffusion#ai generated art#generative art#neural networks#ai generated images#generative ai#generative#marvel#marvel villains#hela#ultron#mysterio#venom#doctordoom#redskull#greengoblin

83 notes

Koi carp illuminated by the sun.

#нейросеть#пруд#кои#лотосы#лучи#digital art#art#ai artwork#ai art#neural networks#generated artwork#generative art#dream#photoshop#wombo art#koi carp#sun rays#lotuses#pond#my ai art#my digital edit#illustrators on Tumblr#ai art gallery

80 notes

It had generated a complete reference with title, author, and everything. Completely fabricated.

It’s not a search engine, it’s a crappy imitation of one.

16K notes

Cute Pinups (workflow in comments)

Give us a follow on Twitter: @StableDiffusion

h/t itsnotrealx

#ai art#art#aiart#StableDiffusion#digital art#ai artwork#stability ai#stable diffusion#dall e ai#dallemini#ai generated#digital painting#deep learning#ai generated art#neural networks#deep dream#generative#generated artwork

154 notes

Detecting AI-generated research papers through "tortured phrases"

So, a recent paper describes a new way to figure out if a "research paper" is, in fact, phony AI-generated nonsense. How, you may ask? The same way teachers and professors detect if you just copied your paper from online and threw a thesaurus at it!

It looks for “tortured phrases”: phrases that resemble standard field-specific jargon but have seemingly been mangled by a thesaurus. Here are some examples (transcript below the cut):

profound neural organization - deep neural network

(fake | counterfeit) neural organization - artificial neural network

versatile organization - mobile network

organization (ambush | assault) - network attack

organization association - network connection

(enormous | huge | immense | colossal) information - big data

information (stockroom | distribution center) - data warehouse

(counterfeit | human-made) consciousness - artificial intelligence (AI)

elite figuring - high performance computing

haze figuring - fog/mist/cloud computing

designs preparing unit - graphics processing unit (GPU)

focal preparing unit - central processing unit (CPU)

work process motor - workflow engine

facial acknowledgement - face recognition

discourse acknowledgement - voice recognition

mean square (mistake | blunder) - mean square error

mean (outright | supreme) (mistake | blunder) - mean absolute error

(motion | flag | indicator | sign | signal) to (clamor | commotion | noise) - signal to noise

worldwide parameters - global parameters

(arbitrary | irregular) get right of passage to - random access

(arbitrary | irregular) (backwoods | timberland | lush territory) - random forest

(arbitrary | irregular) esteem - random value

subterranean insect (state | province | area | region | settlement) - ant colony

underground creepy crawly (state | province | area | region | settlement) - ant colony

leftover vitality - remaining energy

territorial normal vitality - local average energy

motor vitality - kinetic energy

(credulous | innocent | gullible) Bayes - naïve Bayes

individual computerized collaborator - personal digital assistant (PDA)
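
For the curious, here's a rough idea of what that kind of lookup could look like in code. This is only a minimal sketch, not the paper's actual method (which isn't described here): it takes a handful of the pairs from the list above and scans text for them with regular expressions. The names `TORTURED_PHRASES` and `find_tortured_phrases` are made up for this example.

```python
import re

# A few entries copied from the list above: a regex for the tortured
# phrase, mapped to the standard term it most likely replaces.
# (Hypothetical table for illustration; a real detector would need far more.)
TORTURED_PHRASES = {
    r"profound neural organization": "deep neural network",
    r"(?:fake|counterfeit) neural organization": "artificial neural network",
    r"(?:enormous|huge|immense|colossal) information": "big data",
    r"(?:counterfeit|human-made) consciousness": "artificial intelligence",
    r"(?:arbitrary|irregular) (?:backwoods|timberland|lush territory)": "random forest",
    r"(?:credulous|innocent|gullible) bayes": "naive Bayes",
}

# Compile once, matching whole words only and ignoring case.
COMPILED = [
    (re.compile(r"\b" + pattern + r"\b", re.IGNORECASE), term)
    for pattern, term in TORTURED_PHRASES.items()
]


def find_tortured_phrases(text):
    """Return (phrase_found, expected_term) pairs in order of appearance."""
    hits = []
    for pattern, expected in COMPILED:
        for match in pattern.finditer(text):
            hits.append((match.start(), match.group(0), expected))
    hits.sort()  # order by position in the text
    return [(found, expected) for _, found, expected in hits]


if __name__ == "__main__":
    sample = (
        "We train a profound neural organization on colossal information "
        "and compare it with an irregular timberland baseline."
    )
    for found, expected in find_tortured_phrases(sample):
        print(f"{found!r} probably means {expected!r}")
```

A hit only tells you that a phrase looks suspicious, so a human still has to read the paper before calling it fake.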

86 notes

Thought: we shouldn't be calling all these "AI" things Artificial Intelligence.

Instead, I propose we use the term "Algorithmic Generators", or "AG" for short, for these types of things.

Because that better explains what they actually are, and also doesn't incorrectly peg them as "intelligent" or cause confusion about what AI actually means anymore.

106 notes

Controversial opinion but i think we need to give neural nets physical bodies. Not because i think they’re like actually sapient i just think it would be interesting, you know? I’d like to see the almost-person of an ai chatbot given physical form. Like imagine hanging out in person with frank @nostalgebraist-autoresponder

202 notes

This is what the new zoom function in the Midjourney neural network looks like.

94 notes
