#photoship

Video

Harvard by Treflyn Lloyd-Roberts

Via Flickr:

Built in 1942 by Noorduyn for the Royal Canadian Air Force, FE511 takes to the air for a pleasure flight from White Waltham Airfield. An hour or so later, this was my photoship for an air-to-air sortie with Hurricane R4118. Aircraft: Noorduyn AT-16 Harvard IIb G-CIUW/FE511. Location: White Waltham Airfield, near Maidenhead, Berkshire.

#Built#1942#by#Noorduyn#Royal#Canadian#Air#Force#FE511#pleasure#flight#White#Waltham#Airfield#photoship#air-to-air#sortie#Hurricane#R4118#trainer#RAF#RCAF#WW2#WWII#World#War#2#heritage#aviation#military

2 notes

Text

man everything with photoshop has to be so complicated i hate it

#rambling#i have an idea and i'm like ''okay based on my knowledge of ps this execution should be relatively simple''#and then photoshop makes the text orange#and none of the guides account for the fact that you can't change the contrast or the fact that changing the color doesn't get rid of#the orange it just fucks up the colors even more#or the fact that you can't even get rid of any color in the text#and it's like. i KNOW you can do it ps i've seen others do it why are you being so difficult to me

2 notes

Text

i'm actually losing my mind wdym my sister's laptop can't read mp4 files

1 note

Note

screenshots you saying "i fucking love tyranny!" and crops out the rest

[cleverly photoshops out the 'y' so that im not problematic anymore]

139 notes

Text

German Tornado blasting fire next to the Ironbird Photoship

59 notes

Text

is photoshopping fictional characters onto tumblr posts to represent them talking still cringe?

46 notes

Text

Hey, punch out fans

I found this pic online but I can't for the life of me find the source. The artstyle feels familiar and from what I gather it first popped up around june 13 2019. As far as I can tell, the op of the art has deactivated their account because the art links here- https://66.media.tumblr.com/6fb5c0de728e1105734ada5178189a59/fddb3b5c7355c202-1c/s400x600/01747261a7fe7dc6181be68b1ee41f561015f09c.png

Links to the original would be helpful but an idea on who the artist might be would be good too.

Also if you find it for me I might draw you something / make something in photoshop for you

ETA: Even though the original artist IS here, I want to find the og post still! Any help is wanted!

#punch out#seriously I've been looking for over a week and haven't found anything#every other image I've looked for via blog hunting has turned up results in a reasonable time#except this one#so yeah some help would be appreciated

31 notes

Text

can someone send me the link to that one free in browser photoshop

5 notes

Photo

Siderian walking cycles – transverse right-lead canter.

Now also added a texture! I’ve given this Siderian my cat (Oriental shorthair) Nintu’s fur (more about that later). Texture painting in Blender alone is quite a nightmare, so I did most of it in Photoshop but the stencil texturing function in Blender is quite cool.

The tiger I used as a reference had a slightly funny canter, swinging his front paws forwards in a rather nonchalant way. I tried to carry that over into the Siderian animation here. I will explain more about canter vs gallop in some of the next posts.

This is still my demo v.1, 3D animation attempt 1, but I’m happy with the results so far.

Done in Blender and rendered with Cycles (100 samples), at 30 frames per second and a rather small image size to save render time and disk space. Turned into a gif with Photoshop.

#Siderian#animation#3D animation#walking cycle#canter#CGI#Blender#bigcat#feline#my art#FelisGlacialis#mu#Mu v1#Demo v1

17 notes

Text

I miss when mpreg was poorly photoshopping actors' heads onto random mommy bloggers' bodies ft. a soft gaussian blur, 😔 what is the world coming to, now it's just ugly AI

1 note

Text

sooo fucked up they dont tell you that you can import photoshop brushes into clip

2 notes

Text

i want to be photoshopping something. photoshopping an explosion into the background of a picture of saul goodman driving a car. and i want it to take 8 hours and im listening to a podcast and i post it online and it doesnt get much traction but i was having a good time photoshopping it on my computer and im satisfied anyway.

20 notes

Text

People with photoshop skills. Patrick Bateman Barbie poster. Go.

3 notes

Text

A Brief Introduction To Diffusion Models

Demos

Applications

Image Editor: Photoshop plugins like

Effects in Shorts https://youtube.com/shorts/uW13BzNcy-k?feature=share

Music generation: Riffusion

Video generation (very early stage)

Marketing

Creator Marketing tools

And a lot of it went to NSFW content (especially with the leaked models from NovelAI, which specialized in anime-style images)

Players

OpenAI: DALL-E / DALL-E 2

Google: Imagen: Text-to-Image Diffusion Models

Meta: Make-A-Scene

Microsoft: NUWA-Infinity

Midjourney

And other Stable Diffusion-powered start-ups

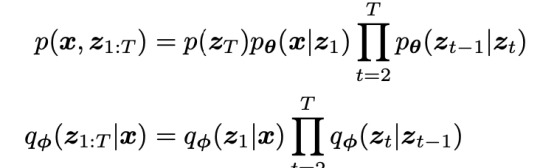

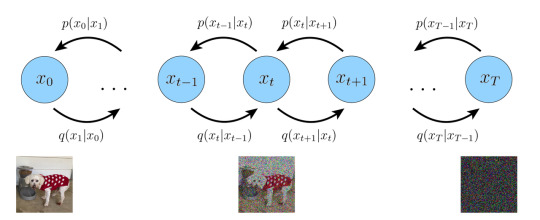

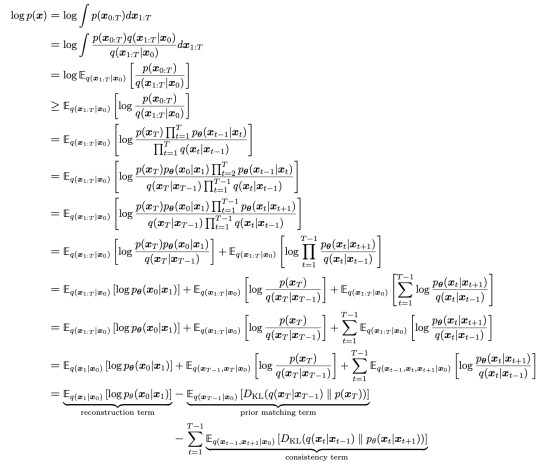

Generating Images: Variational Diffusion Models

What is generation

Given observed samples x from a distribution of interest, the goal of a generative model is to learn to model its true data distribution p(x). Once learned, we can generate new samples from our approximate model at will

Variational Autoencoders

Incorporate latent variables: we can think of the data we observe as represented or generated by an associated unseen latent variable, which we can denote by random variable z.

And how to approximate p(x): maximize the Evidence Lower Bound (ELBO)

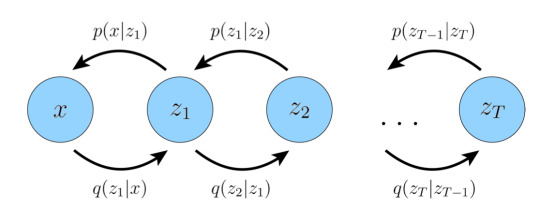
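For reference, the standard textbook form of the ELBO for a VAE can be written as below. The symbols q_φ (the approximate posterior, or encoder) and p_θ (the decoder) are notation assumed here, not taken from the post.

```latex
\log p(x) \;\ge\;
\mathbb{E}_{q_\phi(z \mid x)}\!\left[\log \frac{p(x, z)}{q_\phi(z \mid x)}\right]
= \underbrace{\mathbb{E}_{q_\phi(z \mid x)}\!\left[\log p_\theta(x \mid z)\right]}_{\text{reconstruction term}}
\;-\; \underbrace{D_{\mathrm{KL}}\!\left(q_\phi(z \mid x)\,\|\,p(z)\right)}_{\text{prior matching term}}
```

The gap between the two sides is the KL divergence between q_φ(z|x) and the true posterior p(z|x), so maximizing the bound also pushes the encoder towards the true posterior.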

Hierarchical Variational Autoencoders

Variational Diffusion Models

HVAE with Properties

The latent dimension is exactly equal to the data dimension

The structure of the latent encoder at each timestep is not learned; it is pre-defined as a linear Gaussian model. In other words, it is a Gaussian distribution centered around the output of the previous timestep

The Gaussian parameters of the latent encoders vary over time in such a way that the distribution of the latent at final timestep T is a standard Gaussian
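The three properties above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the linear β schedule and its endpoint values (1e-4 to 0.02) are common defaults rather than something the post specifies, and the function names are hypothetical.

```python
import math
import random


def linear_alphas(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step alpha_t = 1 - beta_t for a linear beta schedule (assumed values)."""
    step = (beta_end - beta_start) / max(num_steps - 1, 1)
    return [1.0 - (beta_start + i * step) for i in range(num_steps)]


def cumulative_alphas(alphas):
    """alpha_bar_t = product of alpha_1 .. alpha_t."""
    out, prod = [], 1.0
    for a in alphas:
        prod *= a
        out.append(prod)
    return out


def forward_step(x_prev, alpha_t, rng):
    """One fixed (not learned) encoder step:
    x_t ~ N(sqrt(alpha_t) * x_{t-1}, (1 - alpha_t) * I).
    The output has the same dimension as the input."""
    return [math.sqrt(alpha_t) * v + math.sqrt(1.0 - alpha_t) * rng.gauss(0.0, 1.0)
            for v in x_prev]


def sample_xt(x0, alpha_bar_t, rng):
    """Closed-form jump from x_0 to x_t:
    x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps.
    Returns (x_t, eps) so eps can be used as a training target."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [math.sqrt(alpha_bar_t) * v + math.sqrt(1.0 - alpha_bar_t) * e
           for v, e in zip(x0, eps)]
    return x_t, eps


if __name__ == "__main__":
    rng = random.Random(0)
    alpha_bars = cumulative_alphas(linear_alphas(1000))
    x_T, _ = sample_xt([1.0, -2.0, 0.5], alpha_bars[-1], rng)
    # alpha_bar_T is close to 0, so x_T is close to a standard Gaussian sample.
    print(alpha_bars[-1], x_T)
```

Because ᾱ_T is nearly zero at the final step, almost nothing of x_0 survives in x_T, which is what the third property asks for.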

Workflow in a nutshell

Three equivalent objectives to optimize a VDM (derived from the ELBO in the appendix, the reparameterization trick, and the VDM's properties)

Learning a neural network to predict the original image x0

Learning a neural network to predict the source noise ε0 (empirically, some works have found this resulted in better performance)

Learning a neural network to predict the score of the image at an arbitrary noise level, ∇ log p(x_t)
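One way to see why the three targets are interchangeable: given x_t and ᾱ_t, each of x_0, ε_0 and the score determines the other two. The sketch below uses the relations x_t = sqrt(ᾱ_t) x_0 + sqrt(1 − ᾱ_t) ε_0 and ∇ log p(x_t) = −ε_0 / sqrt(1 − ᾱ_t), as derived in the first appendix reference. The function names are hypothetical.

```python
import math


def x0_from_eps(x_t, eps, alpha_bar_t):
    """Recover x_0 from x_t and the source noise eps_0."""
    return [(v - math.sqrt(1.0 - alpha_bar_t) * e) / math.sqrt(alpha_bar_t)
            for v, e in zip(x_t, eps)]


def eps_from_x0(x_t, x0, alpha_bar_t):
    """Recover the source noise eps_0 from x_t and x_0."""
    return [(v - math.sqrt(alpha_bar_t) * x) / math.sqrt(1.0 - alpha_bar_t)
            for v, x in zip(x_t, x0)]


def score_from_eps(eps, alpha_bar_t):
    """Score of x_t expressed through the source noise:
    grad log p(x_t) = -eps_0 / sqrt(1 - alpha_bar_t)."""
    return [-e / math.sqrt(1.0 - alpha_bar_t) for e in eps]


def x0_from_score(x_t, score, alpha_bar_t):
    """Tweedie-style estimate:
    x_0 = (x_t + (1 - alpha_bar_t) * score) / sqrt(alpha_bar_t)."""
    return [(v + (1.0 - alpha_bar_t) * s) / math.sqrt(alpha_bar_t)
            for v, s in zip(x_t, score)]
```

A network trained on any one of these targets can therefore be converted into a predictor of the others; the choice mainly changes how errors are weighted across noise levels.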

How it works then

The basic architecture

The learning and inference process

Other details

The weighting of the training objective for different timesteps

Noise schedule
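As one concrete example of a noise schedule, here is a sketch of the cosine schedule from the improved-DDPM line of work. The post does not say which schedule it has in mind, so this choice, the offset s = 0.008, and the clipping value 0.999 are all assumptions. The function names are hypothetical.

```python
import math


def cosine_alpha_bars(num_steps, s=0.008):
    """alpha_bar_t for t = 0..num_steps under a cosine schedule:
    alpha_bar_t = f(t) / f(0), f(t) = cos^2(((t / T) + s) / (1 + s) * pi / 2)."""
    def f(t):
        return math.cos(((t / num_steps) + s) / (1.0 + s) * math.pi / 2.0) ** 2
    return [f(t) / f(0) for t in range(num_steps + 1)]


def betas_from_alpha_bars(alpha_bars, max_beta=0.999):
    """Per-step variances beta_t = 1 - alpha_bar_t / alpha_bar_{t-1},
    clipped to avoid a degenerate final step."""
    return [min(1.0 - alpha_bars[t] / alpha_bars[t - 1], max_beta)
            for t in range(1, len(alpha_bars))]
```

Compared with a linear β schedule, this one destroys information more slowly at the start and end of the chain, which is the usual motivation given for it.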

Trilemma and Variants

Advanced Forward Process

Parameterize the diffusion process, like αt

Non-Markovian diffusion process and denoising process

Momentum-based diffusion

Advanced Reverse Process

Conditional GANs

Advanced Models

Progressive Distillation

Stable Diffusion: lowered the cost, made it consumer-level and popular

Generating Images Under Guidance

A naive way is to add the guidance in the reverse process

Image conditioning: channel-wise concatenation

Text conditioning

Single vector embedding: spatial addition / adaptive group norm

Sequence of vector embeddings: attention

Caveat: VDM may potentially learn to ignore or downplay any given conditioning information

Guidance: explicitly control the amount of weight the model gives to the conditioning information, at the cost of sample diversity

Classifier Guidance

Classifier-free Guidance
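A minimal sketch of the two pieces classifier-free guidance usually needs: randomly dropping the condition during training so that one network learns both conditional and unconditional predictions, and mixing the two predictions at sampling time. The function names and the scale convention are assumptions. Here a scale of 0 is unconditional, 1 is plain conditional, and values above 1 extrapolate past the conditional prediction; some papers write the same mix as (1 + w)·ε_cond − w·ε_uncond, which matches this with scale = 1 + w.

```python
import random


def maybe_drop_condition(cond, p_uncond, rng):
    """Training-time trick: with probability p_uncond, replace the condition
    by None (standing in for a null embedding) so the model also learns
    the unconditional prediction."""
    return None if rng.random() < p_uncond else cond


def cfg_combine(eps_cond, eps_uncond, guidance_scale):
    """Sampling-time mix of the two noise predictions:
    eps = eps_uncond + guidance_scale * (eps_cond - eps_uncond)."""
    return [u + guidance_scale * (c - u) for c, u in zip(eps_cond, eps_uncond)]


if __name__ == "__main__":
    rng = random.Random(0)
    print(maybe_drop_condition("a photo of a cat", 0.1, rng))
    print(cfg_combine([1.0, 2.0], [0.0, 1.0], 3.0))
```

Raising the scale strengthens adherence to the condition and reduces sample diversity, which is the trade-off described above.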

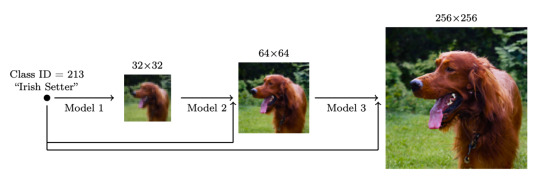

Cascaded Generation

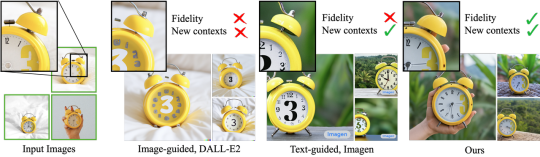

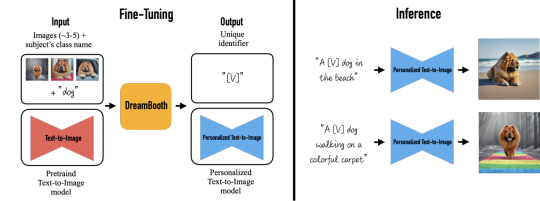

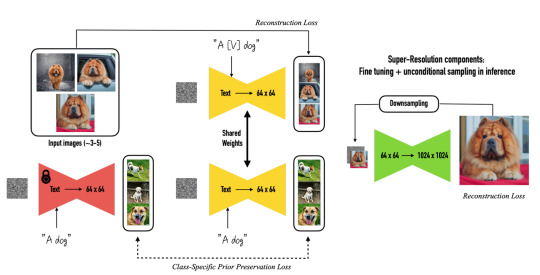

Subject-Driven Generation

DreamBooth

Background

Approach

Results

Appendix

Understanding Diffusion Models: A Unified Perspective

Tackling the Generative Learning Trilemma with Denoising Diffusion GANs

Tutorial on Denoising Diffusion-based Generative Modeling: Foundations and Applications

What are Diffusion Models?

DreamBooth: Fine Tuning Text-to-Image Diffusion Models for Subject-Driven Generation

Frechet inception distance (FID) and Inception Score (IS) for evaluating sample fidelity

For sample diversity, use the improved recall score

For sampling time, we use the number of function evaluations (NFE) and the clock time when generating a batch of 100 images on a V100 GPU.

ELBO of VDM:
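A sketch of the bound in the form used by the first appendix reference, with notation assumed here: q is the fixed forward (noising) process, p_θ the learned reverse process, and T the final timestep.

```latex
\log p(x_0) \;\ge\;
\underbrace{\mathbb{E}_{q(x_1 \mid x_0)}\!\left[\log p_\theta(x_0 \mid x_1)\right]}_{\text{reconstruction term}}
\;-\; \underbrace{D_{\mathrm{KL}}\!\left(q(x_T \mid x_0)\,\|\,p(x_T)\right)}_{\text{prior matching term}}
\;-\; \sum_{t=2}^{T}
\underbrace{\mathbb{E}_{q(x_t \mid x_0)}\!\left[
D_{\mathrm{KL}}\!\left(q(x_{t-1} \mid x_t, x_0)\,\|\,p_\theta(x_{t-1} \mid x_t)\right)\right]}_{\text{denoising matching term}}
```

The prior matching term has no trainable parameters, so training mostly comes down to the denoising matching terms, which is where the three equivalent prediction targets (x_0, ε_0, score) come from.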

2 notes

Note

I don't understand the steps of your coloring tutorial. Photoshop will only let me color frame by frame. When I try to follow your steps and color the gif, Photoshop gives me a circle with a line through it and won't let me do anything. How are you getting around that?

hi anon, i'm really sorry but i don't know what you mean - it's incredibly difficult to diagnose what you might be doing wrong when i can't see the process! have you converted your gif to animation (timeline)? this is what i usually find is the root of issues, but again it's hard to say, i'm really sorry but i can't explain something that doesn't happen to me.

2 notes
